Fast-Track K8s Cluster Setup: Production Deployment in 5 Steps



3. Prepare the Environment

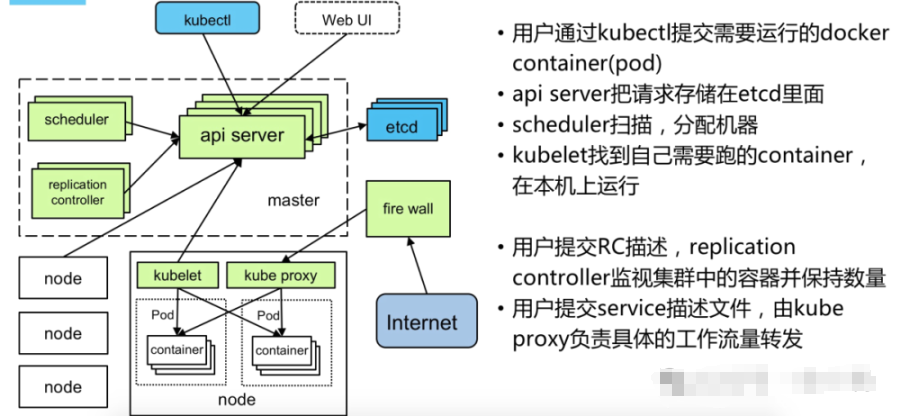

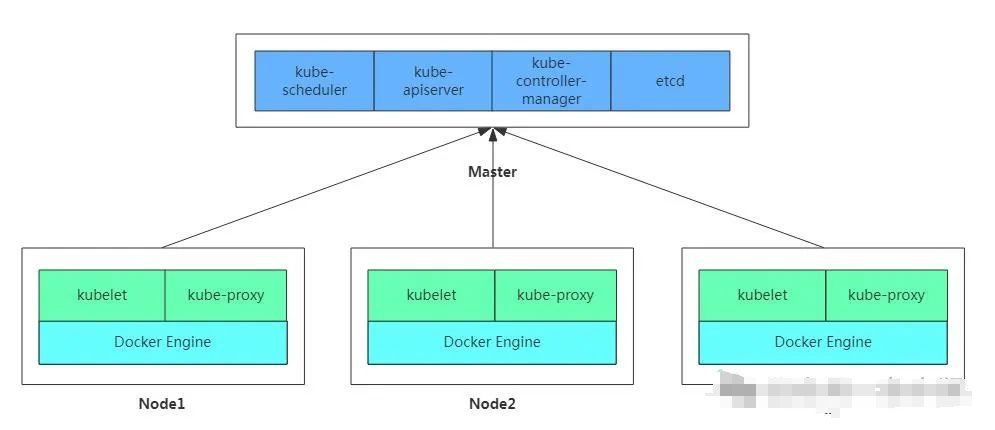

Kubernetes architecture diagram

Run the following on all machines:

The three machines used in this lab: 192.168.234.131, 192.168.234.132, 192.168.234.133

Disable the firewall:

$ systemctl stop firewalld

$ systemctl disable firewalld

Disable SELinux:

$ sed -i 's/enforcing/disabled/' /etc/selinux/config

$ setenforce 0

Disable swap:

$ swapoff -a    # temporary (until the next reboot)

$ vim /etc/fstab    # permanent: comment out the line containing "swap"

Or do both in one step:

$ swapoff -a && sed -i '/ swap / s/^/#/' /etc/fstab
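The sed one-liner above comments out every line containing " swap ", and running it a second time prefixes the line with another "#". Below is a minimal sketch of an idempotent alternative plus a check that swap is really off. The function names comment_swap_entries and swap_is_off are hypothetical helpers, and the fstab path is a parameter so you can try it on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helpers, not part of any Kubernetes tooling.

# Comment out active swap entries in an fstab-style file.
# A line counts as a swap entry when its 3rd field (filesystem type) is
# "swap"; lines that are already commented out are left untouched, so
# running the function twice does not add a second "#".
comment_swap_entries() {
  local fstab="$1" tmp status
  tmp=$(mktemp) || return 1
  # Write the result back with "cat >" so the file keeps its owner and mode.
  awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
    "$fstab" > "$tmp" && cat "$tmp" > "$fstab"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Succeed (exit status 0) when no swap area is active.
# /proc/swaps always starts with one header line, so any extra line
# means a swap device is still in use.
swap_is_off() {
  awk 'END { exit !(NR <= 1) }' "${1:-/proc/swaps}"
}
```

On a node this could be used as `swapoff -a && comment_swap_entries /etc/fstab && swap_is_off && echo "swap is off"`.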

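Map hostnames to IP addresses (remember to set each machine's hostname first, e.g. with hostnamectl set-hostname k8s-master):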

$ cat /etc/hosts

192.168.234.131 k8s-master

192.168.234.132 k8s-node1

192.168.234.133 k8s-node2
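If you script this step, appending blindly duplicates entries every time the script is re-run. A minimal sketch, assuming a hypothetical add_host_entry helper that appends an IP/hostname pair only when that hostname is not already mapped:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP HOSTNAME" to a hosts-format file unless
# some non-comment line already lists HOSTNAME (fields 2..NF are names).
add_host_entry() {
  local hosts_file="$1" ip="$2" name="$3"
  if awk -v n="$name" '
      !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }' "$hosts_file"; then
    return 0   # already present, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Usage on each machine (root is needed when the target is /etc/hosts):
#   add_host_entry /etc/hosts 192.168.234.131 k8s-master
#   add_host_entry /etc/hosts 192.168.234.132 k8s-node1
#   add_host_entry /etc/hosts 192.168.234.133 k8s-node2
```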

Pass bridged IPv4 traffic to the iptables chains:

$ cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

# Load the br_netfilter module

$ modprobe br_netfilter

# Check that it is loaded

$ lsmod | grep br_netfilter

# Apply the settings

$ sysctl --system
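The heredoc above persists the sysctl keys, but modprobe only loads br_netfilter until the next reboot. Below is a minimal sketch that makes both persistent, under two assumptions that go beyond the original steps: the module is listed in /etc/modules-load.d/ (read at boot by systemd-modules-load), and net.ipv4.ip_forward = 1 is added, because kubeadm's preflight checks expect IP forwarding to be enabled. The function name write_k8s_kernel_conf and its optional root-directory argument are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper. The optional argument is a root directory; it
# defaults to "" (the real filesystem). Pass a scratch directory to
# preview the generated files without touching /etc.
write_k8s_kernel_conf() {
  local root="${1:-}"
  mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d" || return 1
  # Loaded automatically at boot by systemd-modules-load.
  printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
  # The two bridge keys from above, plus IP forwarding (assumption).
  cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}
```

Run as root, `write_k8s_kernel_conf && modprobe br_netfilter && sysctl --system` would write the files, load the module now, and apply the keys.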

# Time synchronization

$ yum install ntpdate -y

$ ntpdate time.windows.com

4.1 Install containerd

Install the required packages; yum-utils provides the yum-config-manager command:

$ yum -y install yum-utils

The containerd package and its dependencies are hosted in the Docker repository:

$ yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Check that the repository was added:

$ ls /etc/yum.repos.d/docker-ce.repo

containerd.io depends on the container-selinux package, so the CentOS base repository must be configured as well:

$ wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

$ sed -i 's/\$releasever/7/g' /etc/yum.repos.d/CentOS-Base.repo

Install containerd:

$ yum -y install containerd.io

Start containerd and enable it at boot:

$ systemctl enable --now containerd

Check the version:

$ containerd -v

4.2 Add the Alibaba Cloud YUM repository

$ cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

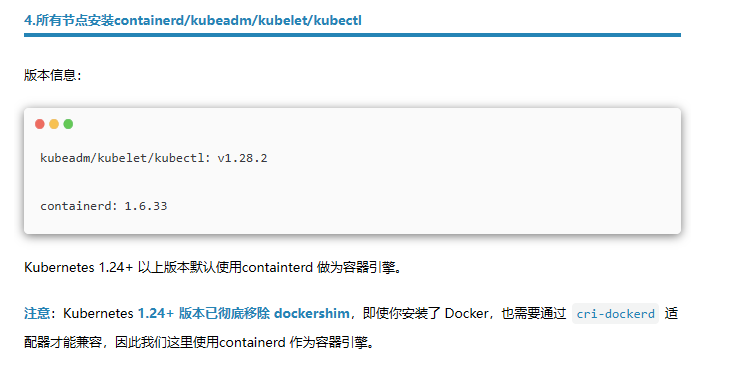

4.3 Install kubeadm, kubelet and kubectl

Because new versions are released frequently, a specific version is pinned here:

$ yum install -y kubelet-1.28.2 kubeadm-1.28.2 kubectl-1.28.2

$ systemctl enable kubelet

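$ systemctl start kubelet

Note: kubelet cannot start successfully yet, because its configuration file has not been generated and the node has not been initialized. Install the packages on all three nodes first; kubelet will start once kubeadm init has been run on the master.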

$ journalctl _PID=<PID>    # if something fails, inspect the details

- Deploy the Kubernetes Master (run on 192.168.234.131, the master).

$ kubeadm init \
    --apiserver-advertise-address=192.168.234.131 \
    --image-repository registry.aliyuncs.com/google_containers \
    --kubernetes-version v1.28.2 \
    --service-cidr=10.1.0.0/16 \
    --pod-network-cidr=10.244.0.0/16
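The same settings can also be kept in a kubeadm configuration file, which is easier to version and reuse across rebuilds. A minimal sketch, assuming the kubeadm.k8s.io/v1beta3 config API (the version kubeadm 1.28 accepts); write_kubeadm_config is a hypothetical helper, and every value is copied from the flags above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a kubeadm config equivalent to the
# `kubeadm init` flags above. Args: output file, advertise address.
write_kubeadm_config() {
  local out="$1" advertise="$2"
  cat > "$out" <<EOF
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${advertise}   # --apiserver-advertise-address
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.28.2                                # --kubernetes-version
imageRepository: registry.aliyuncs.com/google_containers # --image-repository
networking:
  serviceSubnet: 10.1.0.0/16   # --service-cidr
  podSubnet: 10.244.0.0/16     # --pod-network-cidr
EOF
}
```

Usage on the master could then be `write_kubeadm_config kubeadm-config.yaml 192.168.234.131`, followed by `kubeadm config images pull --config kubeadm-config.yaml` and `kubeadm init --config kubeadm-config.yaml`.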

When init finishes, the end of the output shows something similar to the following; run it on each worker node to join it to the master:

kubeadm join 192.168.234.131:6443 --token uil7uf.b7g6vt6kcvzfeqnu \
    --discovery-token-ca-cert-hash sha256:35add0964b670b4b2d8060ec5e055d5b4badc4e59ed79ecb639e276edc393ea1
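Before kubectl works on the master, the kubeadm init output also tells you to copy the admin kubeconfig into $HOME/.kube (the directory mentioned later in this guide in the reset notes). A minimal sketch of that step as a hypothetical install_admin_kubeconfig helper; unlike the printed `cp -i`, it overwrites an existing config instead of prompting, so a stale file from an earlier init is not kept by accident.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring the "mkdir -p $HOME/.kube; cp admin.conf;
# chown" steps that kubeadm init prints. Intended to be run as root on the
# master, as in the rest of this guide (admin.conf is readable only by root).
install_admin_kubeconfig() {
  local src="${1:-/etc/kubernetes/admin.conf}" dest_dir="${2:-$HOME/.kube}"
  mkdir -p "$dest_dir" || return 1
  # -f: replace a config left over from an earlier kubeadm init
  cp -f "$src" "$dest_dir/config" || return 1
  chown "$(id -u):$(id -g)" "$dest_dir/config"
  chmod 600 "$dest_dir/config"   # the file holds cluster-admin credentials
}
```

After `install_admin_kubeconfig`, a quick `kubectl get nodes` should list the master (NotReady until the network add-on is installed).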

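Note: kubeadm init pulls the required images, which can take a while. You can run kubeadm config images pull first to test whether the images can be pulled (pass it the same --image-repository and --kubernetes-version flags). With --image-repository registry.aliyuncs.com/google_containers, pulling images from within mainland China should not be a problem.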

Note: running the command above may fail with the following error:

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

Unfortunately, an error has occurred:

timed out waiting for the condition

This error is likely caused by:

- The kubelet is not running

- The kubelet is unhealthy due to a misconfiguration of the node in some way (required cgroups disabled)

If you are on a systemd-powered system, you can try to troubleshoot the error with the following commands:

- 'systemctl status kubelet'

- 'journalctl -xeu kubelet'

Additionally, a control plane component may have crashed or exited when started by the container runtime.

To troubleshoot, list all containers using your preferred container runtimes CLI.

Here is one example how you may list all running Kubernetes containers by using crictl:

- 'crictl --runtime-endpoint unix:///var/run/containerd/containerd.sock ps -a | grep kube | grep -v pause'

Once you have found the failing container, you can inspect its logs with:

- 'crictl --runtime-endpoint unix:///var/run/containerd/containerd.sock logs CONTAINERID'

error execution phase wait-control-plane: couldn't initialize a Kubernetes cluster

To see the stack trace of this error execute with --v=5 or higher

/var/log/messages shows:

failed to get sandbox image \"registry.k8s.io/pause:3.6\": failed to pull image \"registry.k8

Fix: edit the /etc/containerd/config.toml file.

1. First generate the default configuration:

$ containerd config default > /etc/containerd/config.toml

2. Find the line sandbox_image = "registry.k8s.io/pause:3.6"

and change it to sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"

3. Then restart containerd:

$ systemctl restart containerd
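Editing config.toml by hand on three machines is easy to get wrong. Below is a minimal sketch of the same change done with sed, wrapped in a hypothetical set_sandbox_image helper that rewrites only the value of the sandbox_image line and keeps its indentation. A related setting often changed at the same point is SystemdCgroup = true, so that containerd uses the systemd cgroup driver that kubeadm configures for kubelet by default; that is an assumption beyond the original steps and the sketch does not touch it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set sandbox_image in a containerd config.toml.
# Args: config path, image reference. The image must not contain "|" or "&",
# which are special in the sed expression below.
set_sandbox_image() {
  local config="$1" image="$2"
  # Capture the indentation and "sandbox_image = " part, replace the value.
  sed -i -E "s|^([[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*).*|\1\"${image}\"|" "$config"
}
```

A possible sequence on each node: `containerd config default > /etc/containerd/config.toml`, then `set_sandbox_image /etc/containerd/config.toml registry.aliyuncs.com/google_containers/pause:3.9`, check with `grep sandbox_image /etc/containerd/config.toml`, and finally `systemctl restart containerd`.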

If the kubeadm init configuration above was wrong, reset the node with the following commands:

$ kubeadm reset --force

$ rm -rf $HOME/.kube/*

Then run kubeadm init again to rebuild the cluster.

Remember to delete the $HOME/.kube directory created earlier. If you re-create the cluster without deleting it, kubectl reports the following error:

Unable to connect to the server: x509: certificate signed by unknown authority (possibly because of "crypto/rsa: verification error" while trying to verify candidate authority certificate "kubernetes")

- Install the Pod network add-on (CNI)

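# Deploy the CNI network plugin on the master node:

$ kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# If applying from the URL fails, download the manifest and install it from the local file:

$ wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

$ kubectl apply -f kube-flannel.yml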

Make sure the quay.io registry is reachable. On the master, check the pod status with:

$ kubectl get pods -n kube-system
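While the network add-on comes up, it helps to list only the pods that are not ready yet. A minimal sketch, assuming the default table output of kubectl get pods for a single namespace (columns NAME, READY, STATUS, ...; with -A an extra NAMESPACE column shifts them). not_ready_pods is a hypothetical helper. Depending on the flannel manifest version, the flannel pods may run in their own kube-flannel namespace rather than kube-system.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pods` output on stdin and print the
# names of pods that are not fully ready. Completed pods (finished Jobs) are
# skipped; a pod counts as ready when STATUS is Running and READY is n/n.
not_ready_pods() {
  awk 'NR > 1 && $3 != "Completed" {
    split($2, r, "/")
    if ($3 != "Running" || r[1] != r[2]) print $1
  }'
}
```

For example: `kubectl get pods -n kube-system | not_ready_pods`, repeated until it prints nothing.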

- Join the Kubernetes Nodes

Run on 192.168.234.132/133 (the worker nodes).

To add a new node to the cluster, run the kubeadm join command printed by kubeadm init:

$ kubeadm join 192.168.234.131:6443 --token uil7uf.b7g6vt6kcvzfeqnu \
    --discovery-token-ca-cert-hash sha256:35add0964b670b4b2d8060ec5e055d5b4badc4e59ed79ecb639e276edc393ea1
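When the join command is copied by hand, a truncated token or hash is a common cause of failure. A minimal sketch of a hypothetical build_join_cmd helper that checks both values against the formats kubeadm uses (a bootstrap token is six characters, a dot, then sixteen characters, all lowercase letters or digits; the CA hash is sha256: followed by 64 hex digits) and prints the full command. It only builds the string; it does not run kubeadm.

```shell
#!/usr/bin/env bash
# Hypothetical helper. Args: API server endpoint (host:port), token, CA hash.
build_join_cmd() {
  local endpoint="$1" token="$2" hash="$3"
  if ! printf '%s' "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
    echo "invalid bootstrap token: $token" >&2
    return 1
  fi
  if ! printf '%s' "$hash" | grep -Eq '^sha256:[0-9a-f]{64}$'; then
    echo "invalid CA cert hash: $hash" >&2
    return 1
  fi
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s\n' \
    "$endpoint" "$token" "$hash"
}
```

If the values pass the checks, copy the printed command and run it on the worker node.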

If a node cannot join, check the following:

1. Make sure firewalld, SELinux, swap, etc. meet the requirements for running kubelet.

2. The iptables rules may be corrupted. The following steps can fix this:

(1) On the Kubernetes master, run these commands in order:

$ systemctl stop kubelet

$ systemctl stop containerd

$ iptables --flush

$ iptables -t nat --flush

$ systemctl start containerd

$ systemctl start kubelet

(2) On the Kubernetes master, generate a new token and compute the CA certificate hash:

$ kubeadm token create

424mp7.nkxx07p940mkl2nd

$ openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

d88fb55cb1bd659023b11e61052b39bbfe99842b0636574a16c76df186fd5e0d

(3) On the worker node, run the join command again with the new values:

$ kubeadm join 192.168.234.131:6443 --token 424mp7.nkxx07p940mkl2nd \
    --discovery-token-ca-cert-hash sha256:d88fb55cb1bd659023b11e61052b39bbfe99842b0636574a16c76df186fd5e0d
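The openssl pipeline above can be wrapped in a small function so the hash is computed the same way every time. A minimal sketch; ca_cert_hash is a hypothetical name, the pipeline is the one shown above with the sha256: prefix that kubeadm join expects added, and it assumes an RSA CA key, which is what kubeadm generates by default (openssl rsa would not accept an ECDSA key).

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the discovery hash of a CA certificate,
# formatted for --discovery-token-ca-cert-hash.
# The default path is the kubeadm CA on the master.
ca_cert_hash() {
  local cert="${1:-/etc/kubernetes/pki/ca.crt}" hex
  # Check up front: if the pipeline got no input, openssl dgst would still
  # print the hash of empty input instead of failing.
  [ -r "$cert" ] || { echo "cannot read $cert" >&2; return 1; }
  # SHA-256 over the DER-encoded public key of the certificate.
  hex=$(openssl x509 -pubkey -noout -in "$cert" |
        openssl rsa -pubin -outform der 2>/dev/null |
        openssl dgst -sha256 -hex | sed 's/^.* //')
  printf 'sha256:%s\n' "$hex"
}
```

On the master, `ca_cert_hash` with no argument prints the value to pass after --discovery-token-ca-cert-hash.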

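The default token is valid for 24 hours and cannot be used after it expires. In that case, create a new token and print the complete join command with:

$ kubeadm token create --print-join-command

# Create a token that never expires

$ kubeadm token create --ttl 0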

9. Deploy the Dashboard


kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

By default the Dashboard is reachable only from inside the cluster. To expose it externally, change its Service to type NodePort. First download the manifest:

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

Then edit the file:

vi recommended.yaml

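Find the following Service section and add the two lines marked "added" (type: NodePort and nodePort: 30001). Be careful: there must be a space after each colon.

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort        # added: declare the Service type
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001   # added: a misspelling such as "nodeport", or any other typo here, makes applying the file fail with an error
  selector:
    k8s-app: kubernetes-dashboard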

$ kubectl apply -f recommended.yaml
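Instead of editing the downloaded YAML, the same change can be applied to the already-deployed Service with kubectl patch. A minimal sketch, assuming the v2.7.0 manifest (Service kubernetes-dashboard in namespace kubernetes-dashboard, port 443 to targetPort 8443) and the default NodePort range 30000-32767; dashboard_nodeport_patch is a hypothetical helper that only builds and validates the patch JSON.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a strategic-merge patch that switches the
# Dashboard Service to NodePort on the given port. Service ports are merged
# by their "port" field, so this updates the existing 443 entry.
dashboard_nodeport_patch() {
  local node_port="$1"
  case "$node_port" in
    # Reject empty input, leading zeros and non-digits.
    ''|0*|*[!0-9]*) echo "invalid port: $node_port" >&2; return 1 ;;
  esac
  # Default kube-apiserver --service-node-port-range is 30000-32767.
  if [ "$node_port" -lt 30000 ] || [ "$node_port" -gt 32767 ]; then
    echo "nodePort $node_port is outside 30000-32767" >&2
    return 1
  fi
  printf '{"spec":{"type":"NodePort","ports":[{"port":443,"targetPort":8443,"nodePort":%s}]}}\n' "$node_port"
}
```

Usage: `kubectl -n kubernetes-dashboard patch svc kubernetes-dashboard -p "$(dashboard_nodeport_patch 30001)"`, then `kubectl -n kubernetes-dashboard get svc kubernetes-dashboard` to confirm the TYPE and PORT(S) columns.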

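Access URL: https://NodeIP:30001. Note: HTTPS is required. Some versions of Google Chrome provide no option to trust the Dashboard's self-signed certificate and cannot open the page; use Firefox or another browser instead.

Create a service account and bind it to the default cluster-admin cluster role: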

Option 1: log in with a token

# Create a new ServiceAccount

kubectl create serviceaccount dashboard-admin -n kubernetes-dashboard

# Bind the cluster-admin role

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:dashboard-admin

In Kubernetes 1.24+, a ServiceAccount no longer gets a Secret token generated automatically, so it must be created manually.

Create the token Secret manually:

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Secret
metadata:
  name: dashboard-admin-token
  namespace: kubernetes-dashboard
  annotations:
    kubernetes.io/service-account.name: dashboard-admin
type: kubernetes.io/service-account-token
EOF

# Get the token of the new ServiceAccount

ADMIN_SECRET=$(kubectl get secrets -n kubernetes-dashboard | grep dashboard-admin-token | awk '{print $1}')

ADMIN_TOKEN=$(kubectl describe secret "$ADMIN_SECRET" -n kubernetes-dashboard | grep "^token" | awk '{print $2}'); echo "$ADMIN_TOKEN"

Log in to the Dashboard with the printed token.
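The grep/awk pipeline above picks the token: line out of the kubectl describe secret output. A minimal sketch of the same parsing as a hypothetical token_from_describe helper that matches the field name exactly, so it can be checked against sample output. On Kubernetes 1.24+ you can also skip the Secret and request a short-lived token directly with kubectl -n kubernetes-dashboard create token dashboard-admin.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl describe secret` output on stdin and
# print the value of the "token:" field (other Data fields are ignored).
token_from_describe() {
  awk '$1 == "token:" { print $2; exit }'
}
```

For example: `ADMIN_TOKEN=$(kubectl describe secret dashboard-admin-token -n kubernetes-dashboard | token_from_describe); echo "$ADMIN_TOKEN"`.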

Option 2: log in with a kubeconfig file:

APISERVER=$(kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}')

cat << EOF > dashboard-admin.kubeconfig
apiVersion: v1
kind: Config
clusters:
- cluster:
    certificate-authority-data: $(kubectl get secret dashboard-admin-token -n kubernetes-dashboard -o jsonpath='{.data.ca\.crt}')
    server: ${APISERVER}
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: dashboard-admin
  name: dashboard-admin@kubernetes
current-context: dashboard-admin@kubernetes
users:
- name: dashboard-admin
  user:
    token: ${ADMIN_TOKEN}
EOF

# This writes a kubeconfig file (dashboard-admin.kubeconfig) that embeds the token; select it on the Dashboard login page to log in
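The heredoc above can be turned into a reusable function that takes the server URL, CA data and token as arguments and creates the file with owner-only permissions, since it contains a cluster-admin token. A minimal sketch; write_token_kubeconfig is a hypothetical helper, and the cluster, user and context names are the ones used above.

```shell
#!/usr/bin/env bash
# Hypothetical helper. Args: output file, API server URL,
# base64-encoded CA certificate (as stored in the Secret's ca.crt), token.
write_token_kubeconfig() {
  local out="$1" server="$2" ca_data="$3" token="$4"
  (
    umask 077   # a newly created file gets mode 600 (-rw-------)
    cat > "$out" <<EOF
apiVersion: v1
kind: Config
clusters:
- cluster:
    certificate-authority-data: ${ca_data}
    server: ${server}
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: dashboard-admin
  name: dashboard-admin@kubernetes
current-context: dashboard-admin@kubernetes
users:
- name: dashboard-admin
  user:
    token: ${token}
EOF
  )
}
```

With the variables from above: `write_token_kubeconfig dashboard-admin.kubeconfig "$APISERVER" "$(kubectl get secret dashboard-admin-token -n kubernetes-dashboard -o jsonpath='{.data.ca\.crt}')" "$ADMIN_TOKEN"`.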
