Notes on problems encountered when loading deepseek-r1 with unsloth for the first time

Problem 1: RuntimeError: Failed to find C compiler. Please specify via CC environment variable.

Solution:

apt-get install --no-upgrade build-essential
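Before or after installing, it can help to confirm whether a compiler is actually visible to the Python process. The following is a minimal sketch using only the standard library; the helper name `find_c_compiler` and the list of candidate compiler names are assumptions made for illustration, not part of unsloth.

```python
import os
import shutil


def find_c_compiler(candidates=("cc", "gcc", "clang")):
    """Return the path of the first C compiler found, or None.

    Checks the CC environment variable first (the error message mentions it),
    then falls back to searching PATH for common compiler names.
    """
    cc = os.environ.get("CC")
    if cc:
        resolved = shutil.which(cc)
        if resolved:
            return resolved
    for name in candidates:
        resolved = shutil.which(name)
        if resolved:
            return resolved
    return None


if __name__ == "__main__":
    path = find_c_compiler()
    print(path or "No C compiler found; install build-essential or set CC")
```

If this prints a path after the install, the compiler should be discoverable the next time the training script runs.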

Problem 2: Running the fix for Problem 1 fails with the following error:

Temporary failure resolving 'archive.ubuntu.com'

Solution:

First check the host's network connectivity:

ping -c 4 8.8.8.8

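If the ping succeeds, the network connection is fine and you can move on to checking DNS.

If the ping fails, there is a network problem; check the network configuration (for example the virtual machine network, the Docker network, or proxy settings).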
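The same two-step check (raw IP reachability first, then name resolution) can also be scripted. This is a sketch under assumptions: `can_connect`, `can_resolve`, and `diagnose` are hypothetical helper names, and a TCP connection to port 53 of 8.8.8.8 stands in for ping, because sending ICMP from Python normally requires elevated privileges.

```python
import socket


def can_connect(ip="8.8.8.8", port=53, timeout=3.0):
    """Return True if a TCP connection to ip:port succeeds (rough stand-in for ping)."""
    try:
        with socket.create_connection((ip, port), timeout=timeout):
            return True
    except OSError:
        return False


def can_resolve(hostname="archive.ubuntu.com"):
    """Return True if the system resolver can look up hostname."""
    try:
        socket.getaddrinfo(hostname, 80)
        return True
    except socket.gaierror:
        return False


def diagnose(ip_ok, dns_ok):
    """Map the two check results to the next troubleshooting step."""
    if not ip_ok:
        return "network"  # no connectivity: check VM/Docker network or proxy
    if not dns_ok:
        return "dns"      # connectivity is fine but names do not resolve
    return "ok"
```

Calling `diagnose(can_connect(), can_resolve())` returns `"dns"` in the situation described here, where the IP is reachable but `archive.ubuntu.com` does not resolve.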

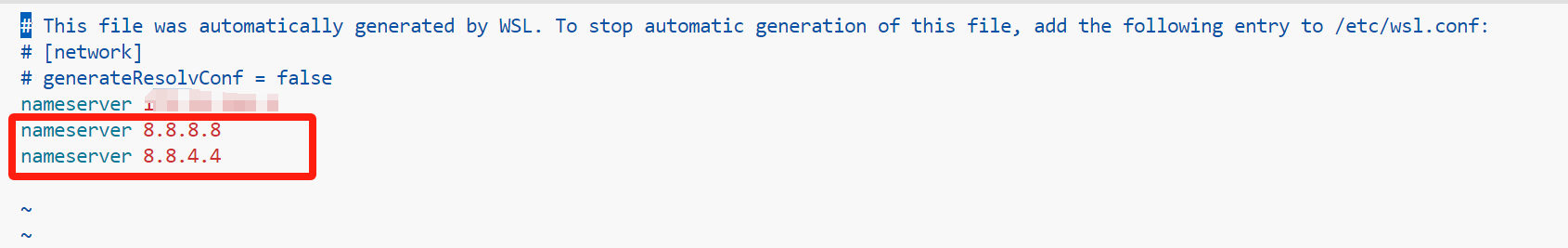

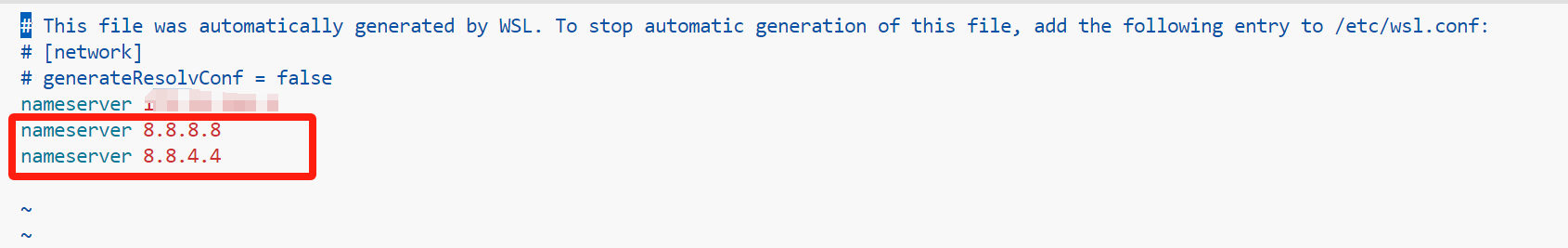

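This error is usually caused by a DNS resolution failure. Set the DNS servers manually: edit /etc/resolv.conf (for example with vim /etc/resolv.conf) and add the following lines: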

nameserver 8.8.8.8

nameserver 8.8.4.4
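To double-check that the edit was saved as intended, the nameserver entries can be read back. A minimal sketch: `parse_nameservers` is a hypothetical helper, and it only handles plain `nameserver <address>` lines, ignoring comments and other directives.

```python
def parse_nameservers(resolv_conf_text):
    """Return the addresses listed on 'nameserver' lines, in order."""
    servers = []
    for line in resolv_conf_text.splitlines():
        # Drop anything after '#' or ';' (comment markers) and trim whitespace.
        line = line.split("#", 1)[0].split(";", 1)[0].strip()
        parts = line.split()
        if len(parts) >= 2 and parts[0] == "nameserver":
            servers.append(parts[1])
    return servers
```

Passing the contents of /etc/resolv.conf to this function should return a list that includes 8.8.8.8 and 8.8.4.4 once the file has been edited.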

Verify that DNS now works by resolving or pinging a domain name:

nslookup archive.ubuntu.com

ping archive.ubuntu.com

This resolves Problem 2; rerunning the apt-get install command then resolves Problem 1 as well.

Problem 3:

Unsloth 2025.2.15 patched 28 layers with 28 QKV layers, 28 O layers and 28 MLP layers.

Traceback (most recent call last):

File "/home/lzw/llm/demo/test.py", line 144, in <module>

trainer = SFTTrainer(

File "/root/anaconda3/envs/unsloth/lib/python3.10/site-packages/unsloth/trainer.py", line 203, in new_init

original_init(self, *args, **kwargs)

File "/home/lzw/llm/demo/unsloth_compiled_cache/UnslothSFTTrainer.py", line 917, in __init__

model.for_training()

File "/root/anaconda3/envs/unsloth/lib/python3.10/site-packages/unsloth/models/llama.py", line 2759, in for_training

del model._unwrapped_old_generate

File "/root/anaconda3/envs/unsloth/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1799, in __delattr__

super().__delattr__(name)

AttributeError: _unwrapped_old_generate
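The last frames of the traceback show a `del` on an attribute that is not present on the model object. The snippet below reproduces that failure mode in plain Python. It is only an illustration with a hypothetical `Dummy` class and helper names, not Unsloth's actual code.

```python
class Dummy:
    """Stand-in object; plays the role of the model in the traceback."""


def remove_attr_unsafely(obj, name):
    """Delete obj.<name>; raises AttributeError if the attribute is absent."""
    delattr(obj, name)


def remove_attr_safely(obj, name):
    """Delete obj.<name> only if present; return whether anything was deleted."""
    if hasattr(obj, name):
        delattr(obj, name)
        return True
    return False


obj = Dummy()
obj._unwrapped_old_generate = "placeholder"
remove_attr_unsafely(obj, "_unwrapped_old_generate")      # first delete works

try:
    remove_attr_unsafely(obj, "_unwrapped_old_generate")  # second delete fails
except AttributeError as exc:
    print("AttributeError:", exc)
```

In other words, the error indicates that the model object is not in the state the training setup expects at that point.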

Solution:

FastLanguageModel.for_training(model) # Add this line to switch the model to training mode

model = FastLanguageModel.get_peft_model(

model,

r = 16, # Choose any number > 0 ! Suggested 8, 16, 32, 64, 128

target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",

"gate_proj", "up_proj", "down_proj",],

lora_alpha = 16,

lora_dropout = 0, # Supports any, but = 0 is optimized

bias = "none", # Supports any, but = "none" is optimized

# [NEW] "unsloth" uses 30% less VRAM, fits 2x larger batch sizes!

use_gradient_checkpointing = "unsloth", # True or "unsloth" for very long context

random_state = 3407,

use_rslora = False, # We support rank stabilized LoRA

loftq_config = None, # And LoftQ

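)

However, in Jupyter the same error may still be raised. I suspect this is because the model was first used for inference and then for training in the same session, so the earlier inference step affected the later training step. The fix is simply to load the model again: add the from_pretrained call below immediately before the get_peft_model call.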

model, tokenizer = FastLanguageModel.from_pretrained(

model_name = "/home/lzw/llm/demo/hugginface1", # Change this to your local model path; in my case, the model files had already been downloaded from Hugging Face to local disk.

max_seq_length = max_seq_length,

dtype = dtype,

load_in_4bit = load_in_4bit,

)

model = FastLanguageModel.get_peft_model(

model,

r=16,

target_modules=[

"q_proj",

"k_proj",

"v_proj",

"o_proj",

"gate_proj",

"up_proj",

"down_proj",

],

lora_alpha=16,

lora_dropout=0,

bias="none",

use_gradient_checkpointing="unsloth", # True or "unsloth" for very long context

random_state=3407,

use_rslora=False,

loftq_config=None,

)
